- Blog

- About

- Contact

- Download Kundli Software For Mac

- Download Scratch 2 For Mac

- Garena Mac Os X Download

- Era Of Celestials Download Mac

- Download Wunderlist Mac Os X

- Download Daemon Tools Crack Mac

- Djay 2 Mac Free Download

- Mac Download Folder In Dock

- Real Racing Mac Free Download

- Java 32 Bit Download Mac

- Fifa 16 Mac Os Download

- Download Epson Scan Mac Yosemite

- Mac Terminal Download Url Page

- Download Google Map Cho Mac

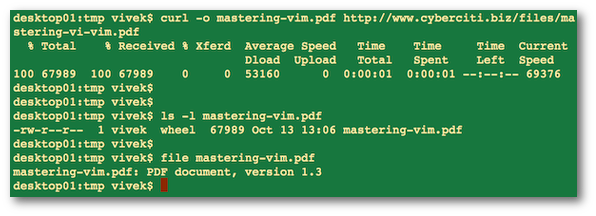

If you’ve ever wanted to download files from many different archive.org items in an automated way, here is one method to do it.

____________________________________________________________

Here’s an overview of what we’ll do:

1. Confirm or install a terminal emulator and wget

2. Create a list of archive.org item identifiers

3. Craft a wget command to download files from those identifiers

4. Run the wget command.

____________________________________________________________

Requirements

Required: a terminal emulator and wget installed on your computer. Below are instructions to determine if you already have these.

Recommended but not required: understanding of basic unix commands and archive.org items structure and terminology.

____________________________________________________________

Section 1. Determine if you have a terminal emulator and wget.

If you don’t have them, you’ll need to install them (both are free).

1. Check to see if you already have wget installed

If you already have a terminal emulator such as Terminal (Mac) or Cygwin (Windows), you can check whether wget is also installed. If you do not have both installed, skip to step 2 below (“To install a terminal emulator and/or wget”). Here’s how to check for wget using your terminal emulator:

1. Open Terminal (Mac) or Cygwin (Windows)

2. Type “which wget” after the $ sign

3. If you have wget the result should show what directory it’s in such as /usr/bin/wget. If you don’t have it there will be no results.
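The same check can be scripted. The sketch below assumes a POSIX shell, which both Terminal and Cygwin provide; have_cmd is a hypothetical helper name and is not part of wget. It uses command -v, which reports a command's location much like which does but is built into the shell.

```shell
#!/bin/sh
# have_cmd is a hypothetical helper: it succeeds when the named
# command can be found on the PATH.
have_cmd() {
    command -v "$1" >/dev/null 2>&1
}

if have_cmd wget; then
    echo "wget found at: $(command -v wget)"
else
    echo "wget not found; it needs to be installed first"
fi
```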

2. To install a terminal emulator and/or wget:

Windows: To install a terminal emulator along with wget please read Installing Cygwin Tutorial. Be sure to choose the wget module option when prompted.

Mac OS X: Mac OS X comes with Terminal installed. You should find it in the Utilities folder (Applications > Utilities > Terminal). There are no official wget binaries available for Mac OS X. Instead, you must either build wget from source code or download an unofficial binary created elsewhere. The following links may be helpful for getting a working copy of wget on Mac OS X.

Prebuilt binary for Mac OSX Lion and Snow Leopard

wget for Mac OSX leopard

Building from source for MacOSX: Skip this step if you are able to install from the above links.

To build from source, you must first install Xcode. Once Xcode is installed, there are many tutorials online to guide you through building wget from source, such as How to install wget on your Mac.

____________________________________________________________

Section 2. Now you can use wget to download lots of files

The method for using wget to download files is:

- Generate a list of archive.org item identifiers (the tail end of the url for an archive.org item page) from which you wish to grab files.

- Create a folder (a directory) to hold the downloaded files

- Construct your wget command to retrieve the desired files

- Run the command and wait for it to finish

Step 1: Create a folder (directory) for your downloaded files

1. Create a folder named “Files” on your computer Desktop. This is where the downloaded files will go. Create it the usual way by using either command-shift-n (Mac) or control-shift-n (Windows).

Step 2: Create a file with the list of identifiers

You’ll need a text file with the list of archive.org item identifiers from which you want to download files. wget will read this file to find the items to download.

If you already have a list of identifiers you can paste or type the identifiers into a file. There should be one identifier per line. The other option is to use the archive.org search engine to create a list based on a query. To do this we will use advanced search to create the list and then download the list in a file.

First, determine your search query using the search engine. In this example, I am looking for items in the Prelinger collection with the subject “Health and Hygiene.” There are currently 41 items that match this query. Once you’ve figured out your query:

1. Go to the advanced search page on archive.org. Use the “Advanced Search returning JSON, XML, and more.” section to create a query. Once you have a query that delivers the results you want click the back button to go back to the advanced search page.

3. Select “identifier” from the “Fields to return” list.

4. Optionally sort the results (sorting by “identifier asc” is handy for arranging them in alphabetical order.)

5. In the “Number of results” box, enter a number that matches (or is higher than) the number of results your query returns.

6. Choose the “CSV format” radio button.

This image shows what the advanced query would look like for our example:

7. Click the search button (may take a while depending on how many results you have.) An alert box will ask if you want your results – click “OK” to proceed. You’ll then see a prompt to download the “search.csv” file to your computer. The downloaded file will be in your default download location (often your Desktop or your Downloads folder).

8. Rename the “search.csv” file “itemlist.txt” (no quotes.)

9. Drag or move the itemlist.txt file into your “Files” folder that you previously created

10. Open the file in a text program such as TextEdit (Mac) or Notepad (Windows). Delete the first line, which reads “identifier”. Be sure you delete the entire line so that the file does not start with a blank line. Then remove all the quotes with a search and replace, replacing each double-quote character (") with nothing.
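If you prefer the terminal to a text editor, the cleanup in step 10 can be sketched as a small shell function. This assumes the export has a single “identifier” header line and wraps each value in double quotes, as described above; clean_itemlist is a hypothetical helper name, and the file paths in the usage line are only examples.

```shell
#!/bin/sh
# clean_itemlist is a hypothetical helper.
#   $1 = the CSV file downloaded from advanced search
#   $2 = the output file, one bare identifier per line
clean_itemlist() {
    # Drop the header line, strip double quotes and carriage returns,
    # then delete any blank lines that remain.
    tail -n +2 "$1" | tr -d '"\r' | sed '/^$/d' > "$2"
}
```

For example: clean_itemlist ~/Downloads/search.csv ~/Desktop/Files/itemlist.txt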

The contents of the itemlist.txt file should now look like this:

…………………………………………………………………………………………………………………………

NOTE: You can use this advanced search method to create lists of thousands of identifiers, although we don’t recommend using it to retrieve more than 10,000 or so items at once (it will time out at a certain point).

………………………………………………………………………………………………………………………...

Step 3: Create a wget command

The wget command uses Unix conventions. Each symbol, letter, or word represents an option that wget will apply.

Below are three typical wget commands for downloading from the identifiers listed in your itemlist.txt file.

To get all files from your identifier list:

wget -r -H -nc -np -nH --cut-dirs=1 -e robots=off -l1 -i ./itemlist.txt -B 'http://archive.org/download/'

If you want to download only certain file formats (in this example pdf and epub), include the -A option, which stands for “accept”. In this example we would download only the pdf and epub files:

wget -r -H -nc -np -nH --cut-dirs=1 -A .pdf,.epub -e robots=off -l1 -i ./itemlist.txt -B 'http://archive.org/download/'

To download all files except specific formats (in this example tar and zip), include the -R option, which stands for “reject”. In this example we would download all files except tar and zip files:

wget -r -H -nc -np -nH --cut-dirs=1 -R .tar,.zip -e robots=off -l1 -i ./itemlist.txt -B 'http://archive.org/download/'

If you want to modify one of these or craft a new one, you may find it easier to do so in a text editing program (TextEdit or Notepad) rather than in the terminal emulator.
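Before running one of these commands, it can help to preview the starting URLs that result from combining the -B base URL with each line of the -i file. The sketch below approximates that by simple concatenation; preview_urls is a hypothetical helper, and it does not contact archive.org or call wget.

```shell
#!/bin/sh
# preview_urls is a hypothetical helper: for each identifier in the
# list file ($1), print the base URL followed by the identifier.
preview_urls() {
    base='http://archive.org/download/'
    while IFS= read -r id || [ -n "$id" ]; do
        if [ -n "$id" ]; then
            printf '%s%s\n' "$base" "$id"
        fi
    done < "$1"
}
```

Running preview_urls ./itemlist.txt inside the “Files” folder prints one URL per identifier, which makes stray quotes or a leftover header line easy to spot.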

…………………………………………………………………………………………………………………………

NOTE: To craft a wget command for your specific needs you might need to understand the various options. It can get complicated, so try to get a thorough understanding before experimenting. You can learn more about unix commands at Basic unix commands.

An explanation of each option used in our example wget commands follows:

-r recursive download; required in order to move from the item identifier down into its individual files

-H enable spanning across hosts when doing recursive retrieving (the initial URL for the directory will be on archive.org, and the individual file locations will be on a specific datanode)

-nc no clobber; if a local copy already exists of a file, don’t download it again (useful if you have to restart the wget at some point, as it avoids re-downloading all the files that were already done during the first pass)

-np no parent; ensures that the recursion doesn’t climb back up the directory tree to other items (by, for instance, following the “../” link in the directory listing)

-nH no host directories; when using -r, wget will create a directory tree to stick the local copies in, starting with the hostname ({datanode}.us.archive.org/), unless -nH is provided

--cut-dirs=1 completes what -nH started by skipping the hostname; when saving files on the local disk (from a URL like http://{datanode}.us.archive.org/{drive}/items/{identifier}/{identifier}.pdf), skip the /{drive}/items/ portion of the URL, too, so that all {identifier} directories appear together in the current directory, instead of being buried several levels down in multiple {drive}/items/ directories

-e robots=off archive.org datanodes contain robots.txt files telling robotic crawlers not to traverse the directory structure; in order to recurse from the directory to the individual files, we need to tell wget to ignore the robots.txt directive

-i ./itemlist.txt location of input file listing all the URLs to use; “./itemlist.txt” means the list should be in the current directory, in a file called “itemlist.txt” (you can call the file anything you want, so long as you specify its actual name after -i)

-B 'http://archive.org/download/' base URL; gets prepended to the text read from the -i file (this is what allows us to have just the identifiers in the itemlist file, rather than the full URL on each line)

Additional options that may be needed sometimes:

-l depth --level=depth Specify the maximum recursion depth. The default maximum depth is 5. This option is helpful when you are downloading items that contain external links or URLs in either the item’s metadata or other text files within the item. Here’s an example command to avoid downloading external links contained in an item’s metadata:

wget -r -H -nc -np -nH --cut-dirs=1 -l 1 -e robots=off -i ./itemlist.txt -B 'http://archive.org/download/'

-A -R accept-list and reject-list, either limiting the download to certain kinds of file, or excluding certain kinds of file; for instance, adding the following options to your wget command would download all files except those whose names end with _orig_jp2.tar or _jpg.pdf:

wget -r -H -nc -np -nH --cut-dirs=1 -R _orig_jp2.tar,_jpg.pdf -e robots=off -i ./itemlist.txt -B 'http://archive.org/download/'

And adding the following options would download all files containing zelazny in their names, except those ending with .ps:

wget -r -H -nc -np -nH --cut-dirs=1 -A '*zelazny*' -R .ps -e robots=off -i ./itemlist.txt -B 'http://archive.org/download/'

See http://www.gnu.org/software/wget/manual/html_node/Types-of-Files.html for a fuller explanation.
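To see how an accept list and a reject list combine, here is a rough shell emulation of the rule described in the wget manual: a list element containing a wildcard character is treated as a pattern, and any other element is treated as a file name suffix. The functions matches_list and would_download are hypothetical helpers written for illustration; they are not part of wget and may differ from its behaviour in edge cases.

```shell
#!/bin/sh
# matches_list NAME LIST: succeed if NAME matches any element of the
# comma-separated LIST (hypothetical helper, not part of wget).
matches_list() {
    name=$1
    old_ifs=$IFS
    IFS=,
    set -f    # keep the shell from expanding wildcards in the list
    for pat in $2; do
        case $pat in
            *\**|*\?*|*\[*|*\]*) glob=$pat ;;    # wildcard: a pattern
            *)                   glob="*$pat" ;; # otherwise: a suffix
        esac
        case $name in
            $glob) IFS=$old_ifs; set +f; return 0 ;;
        esac
    done
    IFS=$old_ifs
    set +f
    return 1
}

# would_download NAME ACCEPT REJECT: succeed if NAME passes both lists.
# An empty list means that list is not in use.
would_download() {
    if [ -n "$2" ] && ! matches_list "$1" "$2"; then
        return 1
    fi
    if [ -n "$3" ] && matches_list "$1" "$3"; then
        return 1
    fi
    return 0
}
```

For example, would_download zelazny_stories.pdf '*zelazny*' .ps succeeds, while the same call with zelazny_stories.ps fails because the reject list excludes it.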

…………………………………………………………………………………………………………………………

Step 4: Run the command

1. Open your terminal emulator (Terminal or Cygwin)

2. In your terminal emulator window, move into your folder/directory. To do this:

For Mac: type cd Desktop/Files

For Windows: in Cygwin, type cd /cygdrive/c/Users/archive/Desktop/Files after the $ (“archive” is the example user name here; substitute your own)

3. Hit return. You have now moved into the “Files” folder.

4. In your terminal emulator, enter or paste your wget command. If you are using one of the commands on this page, be sure to copy the entire command, which may wrap onto two lines. On a Mac you can simply copy and paste. For Cygwin, copy the command, click the Cygwin logo in the upper left corner, select Edit, then select Paste.

5. Hit return to run the command.

You will see your progress on the screen. If you have sorted your itemlist.txt alphabetically, you can estimate how far through the list you are based on the screen output. Depending on how many files you are downloading and their size, it may take quite some time for this command to finish running.
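For a rough progress figure, you can count how many identifiers from the list already have a directory in the current folder, since the options above place each item in its own {identifier} directory. This is only a sketch: count_started is a hypothetical helper, and a directory that exists may still be partially downloaded.

```shell
#!/bin/sh
# count_started is a hypothetical helper: report how many identifiers
# in the list file ($1) already have a directory in the current folder.
count_started() {
    total=0
    started=0
    while IFS= read -r id || [ -n "$id" ]; do
        if [ -n "$id" ]; then
            total=$((total + 1))
            if [ -d "./$id" ]; then
                started=$((started + 1))
            fi
        fi
    done < "$1"
    echo "$started of $total items have a local directory"
}
```

Run count_started ./itemlist.txt from inside the “Files” folder, in a second terminal window if wget is still running.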

…………………………………………………………………………………………………………………………

NOTE: We strongly recommend trying this process with just ONE identifier first as a test to make sure you download the files you want before you try to download files from many items.

…………………………………………………………………………………………………………………………
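One way to set up such a test run is to copy just the first identifier into a separate list file. The sketch below assumes your full list is in itemlist.txt; make_test_list and the file name testlist.txt are hypothetical names chosen for illustration.

```shell
#!/bin/sh
# make_test_list is a hypothetical helper: copy only the first
# identifier from the full list ($1) into a one-line test list ($2).
make_test_list() {
    head -n 1 "$1" > "$2"
}
```

For example, run make_test_list itemlist.txt testlist.txt inside the “Files” folder, then run your wget command with -i ./testlist.txt in place of -i ./itemlist.txt.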

Tips:

- You can terminate the command by pressing “control” and “c” on your keyboard simultaneously while in the terminal window.

- If your command will take a while to complete, make sure your computer is set to never sleep and turn off automatic updates.

- If you think you missed some items (e.g. due to machines being down), you can simply rerun the command after it finishes. The “no clobber” option in the command will prevent already retrieved files from being overwritten, so only missed files will be retrieved.